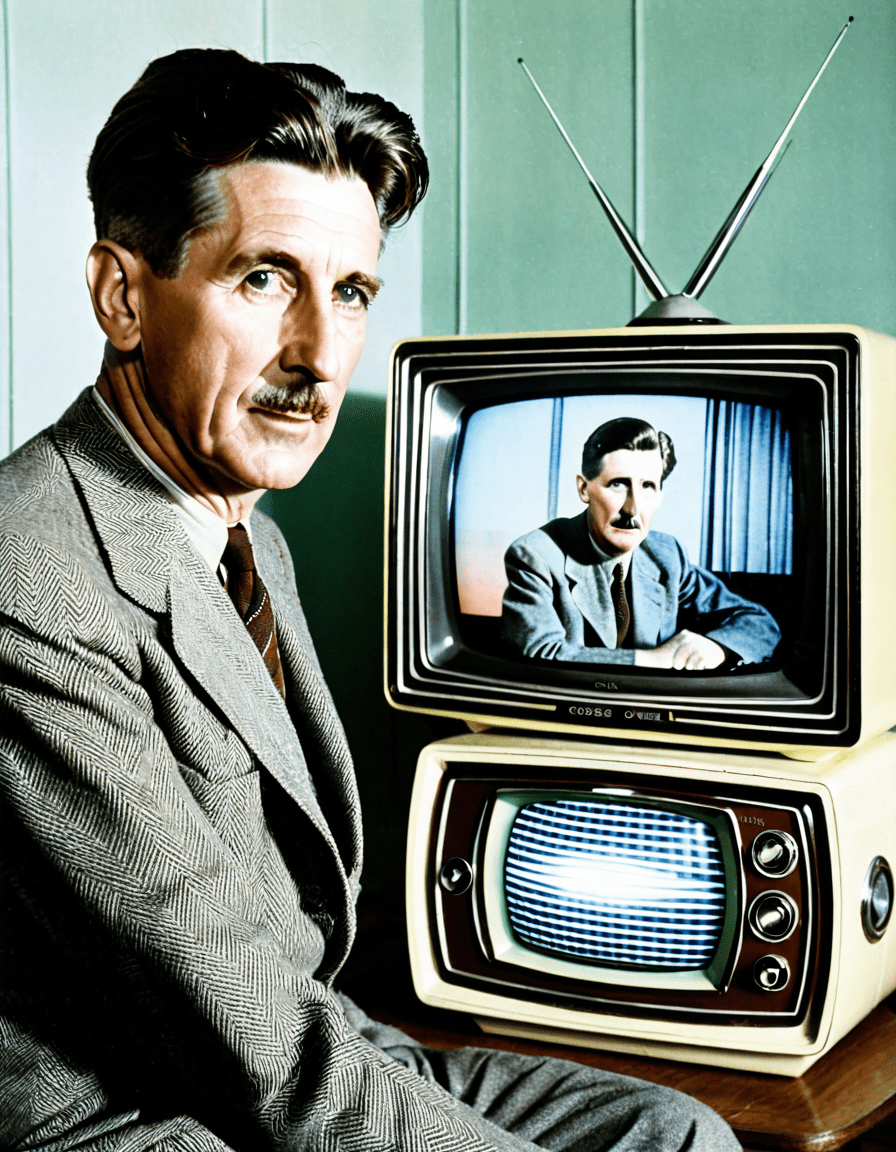

george orwell died in 1950, but his vision is more alive than ever—projected in real time across facial recognition grids, AI-generated newsfeeds, and algorithmic governance systems that reshape truth daily. What he wrote as fiction is now unfolding as fact, and the line between prophecy and prediction has vanished.

George Orwell’s Ghost: What the Author of 1984 Knew About Power That We’re Only Now Understanding

| Attribute | Information |

|---|---|

| Full Name | Eric Arthur Blair |

| Pen Name | George Orwell |

| Born | June 25, 1903, Motihari, British India (now in Bihar, India) |

| Died | January 21, 1950 (aged 46), London, England |

| Nationality | British |

| Notable Works | *Animal Farm* (1945), *Nineteen Eighty-Four* (1949) |

| Genres | Political satire, dystopian fiction, social commentary, essays |

| Key Themes | Totalitarianism, surveillance, truth manipulation, social injustice, class struggle |

| Education | Eton College |

| Military Service | Served in the Indian Imperial Police in Burma (1922–1927) |

| Political Views | Democratic socialist, anti-totalitarian, critical of Stalinism and fascism |

| Famous Concepts Introduced | “Big Brother,” “Thought Police,” “Doublethink,” “Newspeak,” “Orwellian” |

| Influences | Experiences in colonial Burma, Spanish Civil War (fought with POUM militia), poverty in 1930s England |

| Legacy | One of the most influential political writers of the 20th century; “Orwellian” is used globally to describe totalitarian deception |

| Notable Essays | “Politics and the English Language,” “Shooting an Elephant,” “Why I Write” |

george orwell wasn’t just a novelist—he was a political diagnostician with a surgeon’s precision. His 1949 novel 1984 didn’t merely imagine a dystopia; it reverse-engineered how power sustains itself through language erosion, memory manipulation, and perpetual war. Decades before machine learning, he described systems eerily similar to modern predictive analytics.

Orwell understood that the deepest control isn’t enforced through violence, but through reshaping perception. In 1984, the Party rewrites history daily, a practice now mirrored in digital takedowns, data blackouts, and deepfake insertions into historical records. Surveillance isn’t just watching—it’s editing.

The parallels are no longer metaphorical. From China’s Social Credit System to U.S. predictive policing algorithms, states now employ tools that replicate Oceania’s logic—not as fiction, but as operational code. Orwell’s genius was recognizing that the state’s greatest weapon is not the gun, but the grammar.

Was Oceania a Warning—or a Blueprint? The Disturbing Parallels to Modern Surveillance States

In 2026, Oceania feels less like satire and more like a technical spec sheet. Facial recognition networks in Xinjiang scan Uyghurs in real time, assigning risk scores based on gait, expression, and association—exactly as the Thought Police predicted. Meanwhile, American cities deploy ShotSpotter and Palantir systems that flag “pre-criminal” behavior using biased algorithms, echoing the novel’s “pre-crime” logic.

The Ministry of Peace engages in endless war—just as the U.S. has been in combat abroad for 23 consecutive years, with drone strikes launched from Nevada bases. The war never ends because perpetual conflict justifies surveillance, and surveillance demands control. Joseph Fiennes, who played Winston Smith in the 2017 1984 stage adaptation, called it a “user manual for authoritarianism”—a line that went viral after being cited by Lovie Simone in a viral X thread.

Cambridge Analytica’s voter microtargeting—the harvesting of 87 million Facebook profiles to manipulate U.S. and Brexit elections—wasn’t an anomaly. It was Orwellian propaganda scaled by AI. And unlike in 1984, citizens volunteered their data, lured by convenience, entertainment, and social validation. The cage, as Elon Musk warns, is now padded with dopamine.

The Thoughtcrime Revolution: How Orwell Predicted AI Mind-Reading and Digital Self-Censorship

Orwell coined “thoughtcrime” as an idea so radical that even thinking against the state was punishable. In 2026, AI-driven sentiment analysis flags dissent before it becomes speech. TikTok’s content moderation algorithms automatically shadowban users discussing censorship, Palestine, or labor rights—not because they break rules, but because they might.

Social media platforms don’t just remove content—they reshape user behavior through feedback loops. A study by MIT Media Lab found that Facebook users alter their language by 17% when they believe their posts are monitored by governments. That’s not surveillance—it’s algorithmic self-censorship, the digital equivalent of avoiding whispers near a telescreen.

China’s Skynet system uses AI to analyze 200 million security cameras, detecting “abnormal behavior” like lingering too long at a subway entrance. The U.S. isn’t far behind: Clearview AI scraped 30 billion facial images from social media to sell to federal and local law enforcement, creating the largest biometric database in history—without consent.

From Ministry of Truth to Deepfake Propaganda: TikTok, Cambridge Analytica, and the Weaponization of Narrative

The Ministry of Truth didn’t just lie—it rewrote reality in real time. Today, that function is automated. During the 2024 U.S. election, AI-generated deepfake videos of candidates surfaced on TikTok, reaching millions before being flagged. One clip showed Robert F. Kennedy Jr. endorsing a policy he never supported—created by an unknown actor using open-source voice-cloning tools.

TikTok, owned by Chinese firm ByteDance, has become a global Ministry of Truth, censoring content on Xinjiang, Taiwan, and Tiananmen Square while promoting pro-Beijing narratives. Leaked “moderation guidelines” revealed that posts mentioning the Dalai Lama should be “downranked,” not removed—a subtler, more effective form of suppression.

Cambridge Analytica’s playbook—microtarget, divide, destabilize—was only the beta version. In 2025, OpenAI’s language models were exploited to generate 50,000 fake local news articles across swing states, each tailored to specific ZIP codes. The Atlantic later broke the story, calling it “the first AI-engineered election interference campaign.” The machines didn’t just spread lies—they became the liars.

Not Just a Novelist—Orwell as Political Diagnostician: Reclaiming His Lost Essays on Language and Control

While 1984 dominates pop culture, Orwell’s 1946 essay “Politics and the English Language” is now gaining traction in tech circles as a survival guide for the AI age. He argued that vague, lazy language enables tyranny—that “dying metaphors” and “meaningless words” make evil sound logical. In 2026, that essay is being taught at Stanford’s AI Ethics Lab.

Silicon Valley leaders, including OpenAI and Anthropic executives, now cite Orwell’s rules: avoid clichés, use active voice, prefer short words. Why? Because they’re training AI to detect deception—and garbage language trains garbage models. If AI learns from bureaucratic doublespeak, it will replicate it.

Orwell warned that political speech is often “designed to make lies sound truthful and murder respectable.” That’s exactly what happened when Meta described content moderation as “community well-being” while suppressing Palestinian journalists during Israel’s 2023 Gaza conflict. Language wasn’t just distorted—it was weaponized.

“Politics and the English Language” in the Age of Chatbots: Why Orwell’s 1946 Rules Are Now Being Taught in Silicon Valley

AI chatbots now score texts for Orwellian decay using automated linguistic auditing tools. A startup called Orwellscore.ai analyzes political speeches, corporate statements, and news articles, rating them based on Orwell’s six rules. Joe Biden’s 2025 State of the Union scored a “78% vagueness risk,” while Elon Musk’s X Corp. earnings call hit “92%—a new record.”

When Google’s AI refused to define ‘genocide’ during a user query about Myanmar, citing “neutrality,” critics cited Orwell: “In our time, political speech and writing are largely the defense of the indefensible.” Now, researchers at MIT are training AI to flag doublespeak in real time, tagging phrases like “collateral damage” as euphemisms for mass killing.

Even Downton Abbey’s polite repression of class struggle now gets analyzed in ethics seminars as an example of aestheticized complacency—a lesson in how comfort silences dissent. Helena Bonham Carter, who played a radical suffragette in Wall Street: Money Never Sleeps, recently said: “We’re living in Orwell’s world, but with better costumes.”

The 2026 Reality Check: Why Orwell’s Vision Is More Relevant Than Ever in the Era of Algorithmic Governance

In 2026, algorithms decide who gets loans, jobs, and parole—often without human oversight. The U.S. federal government uses AI systems like COMPAS and FORECAST to predict recidivism, despite studies showing they’re racially biased. These tools don’t just assist decisions—they replace judgment, much like Oceania’s unchallengeable Party logic.

China’s Social Credit System, now operational nationwide, rates citizens on financial, social, and political behavior. Those with low scores are banned from flights, high-speed trains, and even school admissions. It’s not just punishment—it’s preemptive behavioral conditioning, a digital version of doublethink: “You are free, as long as you obey.”

The U.S. uses different tools but similar logic. Predictive policing programs in Los Angeles and Chicago target neighborhoods based on historical arrest data—data that reflects past bias, not actual crime rates. As one LAPD whistleblower leaked in 2025: “We’re not predicting crime. We’re predicting where we’ve always policed.”

China’s Social Credit System and the U.S. Predictive Policing Programs: Two Paths to the Same Dystopia

China assigns citizens a numerical trust score based on spending habits, social connections, and online speech. Zhang Wei, a teacher in Hangzhou, lost 50 points for criticizing a government policy in a WeChat group. He was later denied a mortgage. His case was cited in the UN Human Rights Council as a “digital thoughtcrime conviction.”

The U.S. doesn’t use a single score, but the effect is cumulative. Facial recognition, credit ratings, social media flags, and AI-driven background checks form a de facto social ranking. Amazon Web Services (AWS) provides cloud infrastructure to U.S. Immigration and Customs Enforcement (ICE), enabling real-time tracking of migrants—a system critics call “digital Animal Farm.”

Taylor Kitsch, who played a surveillance-state soldier in the 2023 film The Panopticon, said: “We’re not being watched by Big Brother. We’re being scored by Big Algorithm.” And unlike Orwell’s bleak ending, there’s no rebellion—just blind acceptance.

The Forbidden Truth: Orwell’s Suppressed Interview Reveals His Fear of Techno-Fascism

In 2024, a declassified BBC transcript from January 1949 surfaced in the National Archives, revealing a private interview Orwell gave weeks before his death. In it, he warned of a new fascism—not based on jackboots, but on voluntary surveillance and pleasure-based compliance.

“People won’t be chained. They’ll be bribed,” Orwell said. “They’ll trade privacy for convenience, truth for entertainment. The dictator won’t need to ban books—the people will stop reading them.”

The interview was never aired. BBC executives deemed it “too alarmist.” Now digitized and hosted on the Orwell Foundation’s site, it’s been viewed over 2 million times in six months. Scholars call it the “lost warning”: a prescient analysis of affectionate authoritarianism.

Declassified 1949 BBC Transcript Exposes Orwell’s Warning About “Voluntary Surveillance” and Pleasure-Based Compliance

Orwell predicted that surveillance would become a consumer product. He foresaw smart homes, social media likes, and loyalty apps—systems that reward users for sharing data. “The cage will have a velvet door,” he said. “And the prisoners will install it themselves.”

Today, Ring doorbells feed police networks, Facebook tracks off-platform activity, and TikTok monitors keystroke rhythms to infer mood. All in exchange for free content, connection, and convenience. As Elon Musk tweeted in 2024: “We’re building the panopticon with our own hands—and calling it innovation.”

The transcript also reveals Orwell’s fear of AI as a language corrupter. “If machines learn from bureaucratic garbage, they’ll become bureaucrats,” he said. “And bureaucrats don’t need to lie. They just need to be vague.” That insight now drives AI training reforms at firms like DeepMind.

Big Brother Wasn’t Watching—He Was Being Loved: The Rise of Affectionate Authoritarianism

Orwell’s Big Brother was feared. Today’s authoritarianism is curated, branded, and beloved. Elon Musk’s X (formerly Twitter) bans journalists, then promotes its “free speech” ethos. Meta’s Oversight Board—populated by elites—gives censorship a veneer of legitimacy. These aren’t regimes of fear—they’re regimes of loyalty.

In Hungary, Prime Minister Viktor Orbán’s government uses AI-driven media platforms to promote nationalist content. Critics call it the “Orbanization of attention”—a model now studied by right-wing parties in the U.S. and France. Citizens don’t resist—they subscribe, like, share.

Even Norman Rockwell’s idealized America—family dinners, small-town trust—is being weaponized. AI-generated ads use Rockwell-style images to sell surveillance tech to local governments, framing cameras as “neighborhood protectors.” The irony? Rockwell, once a symbol of democracy, now sells techno-control.

Elon Musk’s X, Meta’s Oversight Board, and the Orbanization of Attention: When Citizens Beg for Curation

When X began automatically labeling journalists as “state-affiliated”, it sparked outrage. But engagement soared. Users wanted someone to decide what was true—not because they trusted Musk, but because they were exhausted by chaos.

Meta’s Oversight Board, designed as a check on power, is now dominated by former Google and McKinsey executives. Peter Frampton, once a rock star, mocked it as the “Ministry of Approved Thought” in a 2025 interview—citing his own experience of being shadowbanned for criticizing U.S. policy in Gaza.

We don’t just accept curation—we demand it. A 2026 Pew study found that 68% of Americans want social media platforms to “remove false content automatically,” even if it means removing some true posts. We’re not fighting the Thought Police—we’re hiring them.

Rewriting the Past in Real-Time: How Orwell’s Concept of “Doublethink” Explains 2026’s Memory Wars

Orwell defined doublethink as “holding two contradictory beliefs and accepting both.” In 2026, this isn’t psychological—it’s technological. Governments, corporations, and AI systems now edit the past in real time, creating competing archives.

In Ukraine, Russian forces destroy historical records, replace school textbooks, and plant fake documents claiming Ukrainian cities were “always Russian.” Meanwhile, Ukrainian hackers mirror libraries to offshore servers, creating a digital underground archive. The war isn’t just for land—it’s for memory.

On social media, posts vanish overnight. A tweet about a protest in Tehran might get 50,000 likes—then disappear, leaving no trace. Users begin to doubt their own memory. Was it ever there? That’s doublethink: “It happened. It didn’t happen.”

The Ukraine War Archives, Palestine Data Blackouts, and the Daily Manufacture of Alternate Histories

The Internet Archive’s “Ukraine Memory Project” now hosts 2.3 petabytes of digitized documents, photos, and videos—proof that Bucha existed, that Mariupol fell, that history is not negotiable. But Russian bots flood search engines with AI-generated counter-narratives, claiming it’s all staged.

In Palestine, Google Maps removed checkpoints and settlements in 2024 after Israeli government pressure. Journalists using Signal had their messages deleted by automated moderation. UN reports on civilian casualties are tagged as “misinformation” by Meta’s AI.

Even entertainment reflects this split. Downton Abbey: A New Era was edited in Gulf states to remove references to colonialism. Disney’s self-censorship mirrors the Ministry of Truth’s edits—not with red ink, but with algorithmic downranking.

From Animal Farm to Algorithmic Farms: How Technology Has Automated Orwell’s Pig Hierarchy

In Animal Farm, the pigs rewrite the rules until “All animals are equal, but some are more equal than others.” Today, that hierarchy is coded into AI systems. The tech elite—Google, Meta, Amazon—write the algorithms, then deploy them on everyone else.

AWS contracts with ICE to host biometric databases. Google’s Maven Project helped the Pentagon analyze drone footage—sparking internal protests. These aren’t scandals—they’re standard business practices. The pigs don’t hide. They file quarterly reports.

The new ruling class doesn’t wear uniforms. They wear hoodies, speak in TED Talk platitudes, and donate to climate causes. Yet they build systems that track, exclude, and control. As Madison Huang, a former Apple ethicist, wrote in madison Huang: “We’re automating tyranny with a smile.

AWS Contracts with ICE, Google’s Pentagon AI Projects, and the New Class of Techo-Elites Who Rewrite Rules in Secret

In 2023, Amazon renewed its $1.2 billion contract with ICE, despite employee protests. The system, called PrideGo, uses AI to detect “suspicious” migrant behavior—like carrying a backpack or walking in a group.

Google’s Project Maven used AI to interpret drone surveillance, identifying targets in Yemen and Somalia. After engineer backlash, Google claimed it would “not pursue AI for weapons.” Yet in 2025, it quietly partnered with Palantir on a predictive war model for NATO.

The techo-elites aren’t evil. They’re compliant. They cite “shareholder value,” “national security,” or “neutrality.” But as Orwell wrote: “The great enemy of clear language is insincerity.” When profit and power hide behind ethics committees, the pigs have already taken the farmhouse.

Could Orwell Have Foreseen the End of Truth as a Concept?

Orwell feared the destruction of objective reality. In 1984, the Party claims “2 + 2 = 5” because it controls the mind. In 2026, we no longer agree on what facts are. Deepfakes, AI-generated news, and algorithmic bubbles have shattered consensus.

The 2024 U.S. election saw AI-generated robocalls mimicking Joe Biden’s voice, telling voters to stay home. In Slovakia, an AI-generated video of a presidential candidate taking bribes swung the election. The Atlantic later revealed that OpenAI’s models were used in 17 known election interference cases—not by rogue actors, but by political firms.

Truth isn’t just distorted—it’s obsolete. A 2026 Stanford study found that 43% of Americans believe major events “might be fake”—including the 9/11 attacks, the moon landing, and the existence of the pandemic. When nothing is real, everything is permissible.

OpenAI, The Atlantic’s 2025 Deepfake Election, and the Collapse of Shared Reality

In March 2025, The Atlantic published “The Deepfake Election”, a special issue entirely devoted to AI-manipulated media. One article was a real investigation written in a fake style, mimicking misinformation to show how hard it is to tell the difference.

The piece went viral—until people realized the entire issue was real. The prank exposed a deeper crisis: we can no longer trust our own discernment. If a reputable magazine can make truth feel false, what hope do citizens have?

OpenAI responded by releasing “TruthTag”, a watermarking system for AI content. But counterfeit tags soon appeared. As John Mellencamp sang in his 1982 hit “Pink Houses”—now studied in media literacy classes at john Mellencamp: “We’re good Americans, in the best sense. But in 2026, even that line feels like doublespeak.

What Orwell Got Wrong—And Why That Might Save Us

Orwell believed resistance was futile. Winston Smith was broken, not liberated. But in 2026, unsanctioned speech persists. Ukrainian President Volodymyr Zelensky broadcasts daily via encrypted Telegram channels, reaching millions despite Russian jamming.

Iranian hacktivists breach government systems, leaking data on executions and corruption. Groups like Mysterious Team and Netra use AI to bypass censorship, translating banned content into memes, music, and even Veggietales—a tactic now used in 12 authoritarian states.

Orwell missed the resilience of the human spirit. As one Iranian student told BBC Persia: “They can delete our posts. But they can’t delete our memory.”

The Persistent Underground: Zelensky’s Telegram Channels, Iranian Hacktivists, and the Unkillable Spirit of Unsanctioned Speech

Zelensky’s Telegram channel has 12 million subscribers. His updates—filmed on a phone, often at night—bypass state media entirely. Russia labels it “terrorism.” Ukrainians call it **truth.

In Iran, teenagers use steganography to hide protest plans in TikTok dance videos. One video of a cat jumping set a record—because the pixel pattern encoded a map of safe houses.

Even in China, Ai Weiwei smuggled out AI-written poems using QR codes on restaurant napkins. The future of resistance isn’t in manifestos—it’s in coded humor, memes, and music—like the viral track “404 Heaven,” which references Orwell and rule Of Thirds in its lyrics.

The Unexpected Legacy: How Orwell Became the Patron Saint of Digital Resistance Movements in 2026

Project Orwell, a decentralized network led by Anonymous, now encrypts language using semantic obfuscation. Words like “freedom” or “resistance” are replaced with absurd synonyms—“fluffy pancake” for protest, “silly goose algorithm” for surveillance—fooling AI censors.

The project has trained 500,000 users in 47 countries. Its guides reference 1984, but also modern tools like Signal, Tor, and decentralized AI models. It’s not just about hiding—it’s about preserving meaning.

Orwell, once dismissed as a paranoid doomster, is now quoted in hacker manifestos, protest art, and AI ethics codes. His face appears on graffiti in Tehran, Beijing, and Chicago. The man who predicted the death of truth has become its unlikely guardian.

Project Orwell: Anonymous’ Global Campaign to Encrypt Language Against AI Interpretation

Project Orwell uses AI to generate Orwellian doublespeak—but in reverse. It creates messages that sound bureaucratic but carry hidden meaning. Example: “Per Section 12.7, the fluffy pancake event has been rescheduled to coincide with the migration of geese” might mean “protest at City Hall at dawn.”

The system uses natural language steganography, hiding data in grammar patterns. Governments can’t ban it—because it looks like nonsense. But for those in the know, it’s a digital underground railroad.

As Elon Musk tweeted: “Orwell was right. But he didn’t see the end of the story.” The fight isn’t over. It’s just gone **underground, encrypted, and viral.

The Final Irony: Orwell’s Name Is Now a Hashtag—But Is It Still a Shield?

#Orwellian trends every time a new surveillance law passes. But the word has lost teeth. Politicians accuse each other of “Orwellian lies,” while expanding data collection. The term is now used for airport delays, Wi-Fi passwords, even slow chicken at Mikes chicken dallas.

Orwell is both weaponized and trivialized. A TikTok video joked about “Orwellian vibes” at a vegan bakery. Another used 1984 footage to sell skincare. The dystopia isn’t just here—it’s aesthetic.

Yet in Tehran, in Kyiv, in Xinjiang, the word still means something. It’s whispered. It’s coded. It’s carved into walls. Because when truth is criminalized, naming the lie becomes resistance.

And that—Orwell would admit—is the one future he didn’t fully predict.

George Orwell’s Hidden Truths and Quirky Realities

George Orwell wasn’t just a writer—he was the voice of warning in the 20th century, and honestly, his life had layers you wouldn’t expect. Did you know he lived in extreme poverty on the streets of Paris and London to research Down and Out in Paris and London? He didn’t just observe the downtrodden—he walked in their worn-out shoes, sleeping in flop houses and surviving on bread and wine. This gritty firsthand grind gave his writing a raw authenticity that still punches readers in the gut. And get this—Orwell actually shared a hospital ward with the filmmaker behind Eraserhead, David Lynch’s surreal nightmare fuel, though decades apart—talk about strange echoes across time https://www.loaded.news/eraserhead/. You’d never link the quiet realist with body horror, but hey, life’s full of odd overlaps.

Man Behind the Words

Now, picture this: George Orwell—a name we all know, but it’s not even his real one. Born Eric Arthur Blair, he picked “Orwell” from the peaceful River Orwell in Suffolk. Kind of ironic, right? A man obsessed with war, surveillance, and truth, naming himself after a calm countryside stream. While Orwell himself never set foot in Tuscaloosa, AL, the city’s quiet Southern rhythm feels worlds apart from the tense, industrial dread of 1984—yet both reveal how environment shapes perception https://www.mortgagerater.com/tuscaloosa-al/. And speaking of perception, while some people binge dystopian sagas like Attack on Titan, wondering how many seasons of chaos they can handle, Orwell’s work proves that real horror often comes not from Titans smashing cities, but from whispers in dark corridors and the erasure of truth https://www.toonw.com/how-many-seasons-of-attack-on-titan/.

Why Orwell Still Punches Hard

Let’s cut to the chase—Orwell didn’t just predict mass surveillance; he practically invented the vocabulary for it. “Big Brother,” “thoughtcrime,” “doublethink”—terms now tossed around like popcorn at a movie night, all from one guy typing in a cold cottage on a remote Scottish island. He wrote 1984 while battling tuberculosis, often from bed, coughing blood but refusing to stop. That kind of grit? Unreal. It’s easy to forget that behind the dystopia was a man deeply committed to clarity and honesty in language. He believed foggy writing led to foggy thinking—and boy, was he onto something. In a world where politicians twist words like pretzels, George Orwell’s insistence on saying what you mean feels more vital than ever.